We are still in deep shock that a celebrity as big as Steve Harvey would state something that most other major black celebrities would never say. You would NEVER hear Oprah, Denzel, Will Smith or Eddie Murphy say what Harvey said about racism in Hollywood. We’re not sure which is more shocking: Harvey’s statement, or the fact that The Hollywood Reporter, a magazine that has a black person on the cover once a decade, actually PRINTED it. KUDOS to Steve. Here is a quote from a recent Hollywood Reporter story. “Hollywood is still very racist,” Harvey says. “Hollywood is more racist than America is. They put things on TV that they think the masses will like. Well, the masses have changed. The election of President Obama should prove that. And television should look entirely different. [Scandal star] Kerry Washington should not be the first African-American female to head up a drama series in 40 years. In 40 years! That’s crazy.”

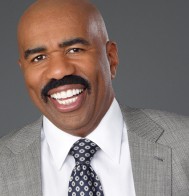

Steve Harvey Says Hollywood is More Racist than America

I would tend to agree; there is a lot of discrimination and prejudice still entrenched in the entertainment industry, and in Hollywood in particular. I own an online radio station: http://www.1067thebridge.com. It plays old school R&B and smooth jazz, and I’ve been told that “urban radio” isn’t as valuable as other formats. I’ve even had prospective advertisers tell me that they would be fearful if too many Black patrons were to come into their stores. We still have to deal with this kind of mentality in 2013. Steve’s not lying; he’s just telling the truth where many others won’t.

If Steve doesn’t keep the truth to himself, he’s going to wind up like Arsenio. Remember when Arsenio had Louis Farrakhan on to state his side of things? Arsenio was GONE the next week.